Ossama Ahmed

Senior Robotics Research Engineer

Nvidia

About Me

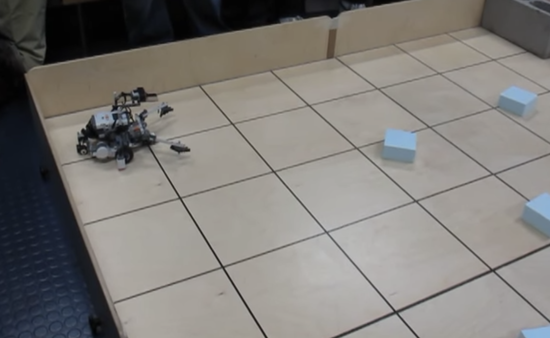

I am currently a Senior Robotics Research Engineer at Nvidia. I am interested in all topics surrounding Machine Learning, Perception and Robotics. Previously, I obtained an MSc in Robotics, Systems and Control at ETH Zürich. Before that, I worked at Qualcomm with the SNPE SDK team after finishing my undergraduate degree in Software Engineering at McGill.

Interests

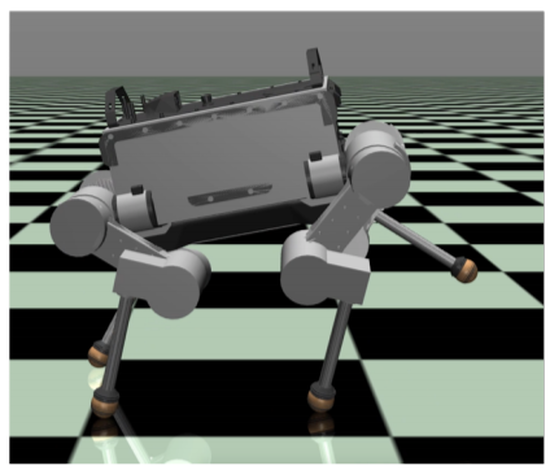

- Machine Learning

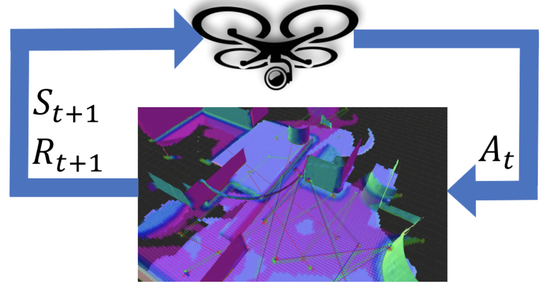

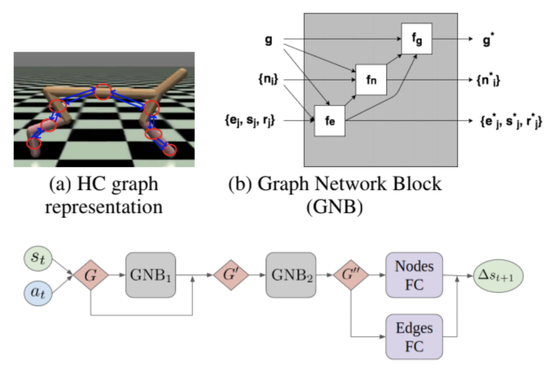

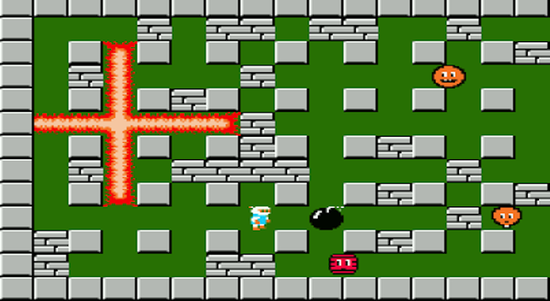

- Reinforcement Learning

- Robotics

- Sim2Real

- Motion Planning

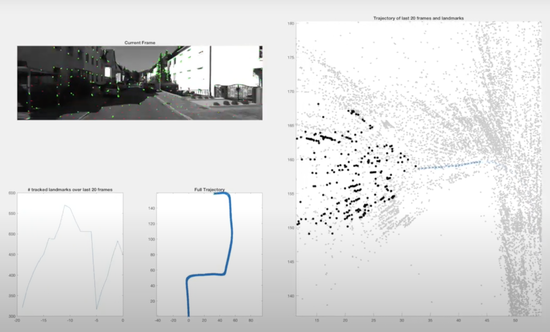

- Perception

- Transfer Learning

Education

- MSc in Robotics, Systems and Control, 2020
  ETH Zürich

- BEng in Software Engineering, 2016
  McGill University